Code:

```python
#!/usr/bin/env python
```

Output:

```
Epoch 4/5.... Discriminator Loss: 0.4584.... Generator Loss: 4.8776....
```
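The log line reports one discriminator loss and one generator loss per epoch, but the code that computes them did not survive. The following is a minimal sketch, not the author's code. It assumes the common sigmoid cross-entropy formulation of GAN losses and uses the same numerically stable formula that TensorFlow documents for `tf.nn.sigmoid_cross_entropy_with_logits`. It is written in NumPy so it runs without TensorFlow. The names `gan_losses` and `format_log`, and the optional `smooth` label-smoothing parameter, are hypothetical.

```python
import numpy as np


def sigmoid_bce_with_logits(logits, labels):
    """Element-wise sigmoid cross-entropy on raw logits.

    Uses the numerically stable form max(x, 0) - x * z + log(1 + exp(-|x|)),
    the same formula documented for tf.nn.sigmoid_cross_entropy_with_logits.
    """
    x = np.asarray(logits, dtype=np.float64)
    z = np.asarray(labels, dtype=np.float64)
    return np.maximum(x, 0.0) - x * z + np.log1p(np.exp(-np.abs(x)))


def gan_losses(d_logits_real, d_logits_fake, smooth=0.0):
    """Hypothetical helper: standard GAN losses from discriminator logits.

    Assumption: the original post's code is not available, so this follows the
    common formulation, not necessarily the author's exact implementation.
    """
    real = np.asarray(d_logits_real, dtype=np.float64)
    fake = np.asarray(d_logits_fake, dtype=np.float64)
    # Discriminator: real images labeled 1 (optionally smoothed down), fakes labeled 0.
    d_loss_real = np.mean(sigmoid_bce_with_logits(real, np.ones_like(real) * (1.0 - smooth)))
    d_loss_fake = np.mean(sigmoid_bce_with_logits(fake, np.zeros_like(fake)))
    d_loss = d_loss_real + d_loss_fake
    # Generator (non-saturating form): it wants the discriminator to label fakes as 1.
    g_loss = np.mean(sigmoid_bce_with_logits(fake, np.ones_like(fake)))
    return float(d_loss), float(g_loss)


def format_log(epoch, epochs, d_loss, g_loss):
    """Hypothetical helper reproducing the layout of the logged line."""
    return "Epoch {}/{}.... Discriminator Loss: {:.4f}.... Generator Loss: {:.4f}....".format(
        epoch, epochs, d_loss, g_loss)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Synthetic logits for illustration: real samples scored high, generated samples low.
    real_logits = rng.normal(2.0, 1.0, size=64)
    fake_logits = rng.normal(-2.0, 1.0, size=64)
    d, g = gan_losses(real_logits, fake_logits)
    print(format_log(4, 5, d, g))
```

Under this formulation, a low discriminator loss paired with a high generator loss, as in the logged epoch, usually means the discriminator is currently separating real and generated images easily. The generator term uses the non-saturating form, where fakes are labeled 1. This is a standard choice but an assumption here.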

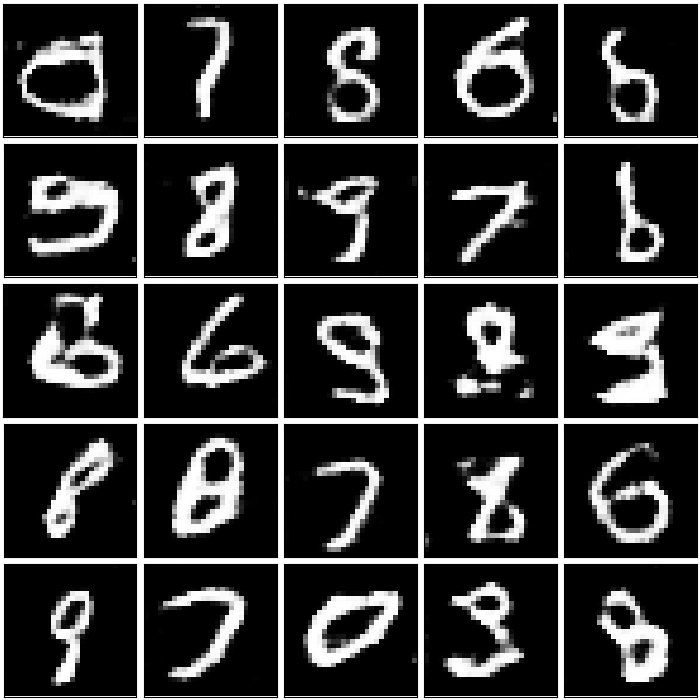

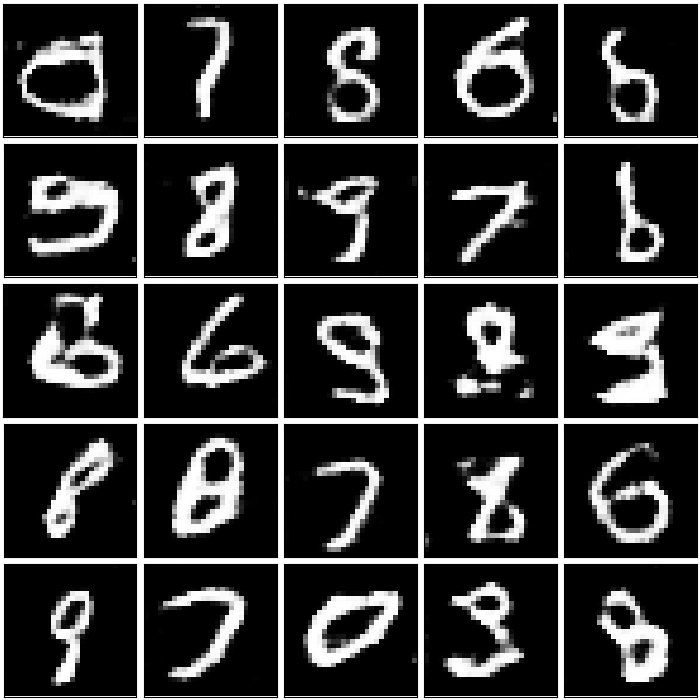

Generated images:

When reposting, please credit: Seven's Blog (Seven的博客)

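Post title: Implementing a Deep Convolutional Generative Adversarial Network (DCGAN) in TensorFlow

Author: Seven

Published: September 4, 2018 - 00:00:00

Last updated: December 11, 2018 - 22:11:58

Original link: http://yoursite.com/2018/09/04/2018-09-04-TensorFlow-DCGAN/

License: Attribution-NonCommercial-NoDerivatives 4.0 International (CC BY-NC-ND 4.0). When reposting, please keep the original link and credit the author.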
